AI capabilities have advanced rapidly in recent years, driven by larger datasets, greater computational power, and improved architectures.

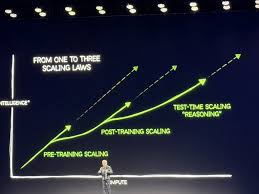

Neural scaling laws, developed in influential research at OpenAI and DeepMind, describe how increasing model size, training data, and compute leads to predictable improvements in performance, usually measured as lower test loss.

The Relationship Between Model Size, Compute, and Data

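Scaling laws indicate that a model's test loss falls as a power law in the number of parameters, the amount of training data, and the training compute, so the relationship appears as a roughly straight line on a log-log plot. Large Language Models (LLMs) such as GPT-4 and Claude use billions of parameters and vast datasets to reach strong performance on many language and reasoning tasks. However, because each further gain requires multiplying resources, diminishing returns and rising energy consumption pose challenges to continued scaling.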
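To make the power-law relationship concrete, here is a minimal Python sketch, assuming a Chinchilla-style loss fit L(N, D) = E + A/N^α + B/D^β and the common rule of thumb that training costs about 6·N·D FLOPs for N parameters and D tokens. The constants are roughly those reported by Hoffmann et al. (2022) but should be treated as illustrative assumptions, and the function names are hypothetical. For each compute budget, the code grid-searches the model size that minimizes predicted loss, with the token count fixed by the budget.

```python
# Chinchilla-style parametric loss: L(N, D) = E + A / N**ALPHA + B / D**BETA.
# ASSUMPTION: constants approximate the fit reported by Hoffmann et al. (2022);
# they are illustrative, not authoritative.
E, A, B = 1.69, 406.4, 410.7
ALPHA, BETA = 0.34, 0.28


def predicted_loss(n_params: float, n_tokens: float) -> float:
    """Predicted pre-training loss for n_params parameters trained on n_tokens tokens."""
    return E + A / n_params**ALPHA + B / n_tokens**BETA


def tokens_for_budget(compute_flops: float, n_params: float) -> float:
    """Tokens affordable under the rule of thumb C ~= 6 * N * D training FLOPs."""
    return compute_flops / (6.0 * n_params)


def compute_optimal(compute_flops: float, steps: int = 400) -> tuple[float, float, float]:
    """Grid-search model size N (1e6..1e13, log-spaced) minimising loss at fixed compute.

    Returns (n_params, n_tokens, loss) for the best grid point.
    """
    candidates = []
    for i in range(steps + 1):
        n = 10 ** (6 + 7 * i / steps)
        d = tokens_for_budget(compute_flops, n)
        candidates.append((n, d, predicted_loss(n, d)))
    return min(candidates, key=lambda c: c[2])


# Sweep compute budgets from 1e19 to 1e24 FLOPs.
budgets = [10.0**k for k in range(19, 25)]
results = [compute_optimal(c) for c in budgets]
for c, (n, d, loss) in zip(budgets, results):
    print(f"C={c:.0e} FLOPs -> N~{n:.2e} params, D~{d:.2e} tokens, loss~{loss:.3f}")
```

Under this fit, each tenfold increase in compute lowers the predicted loss by a smaller amount than the previous one, which is the diminishing-returns pattern described above. The grid search is deliberately simple; the optimum can also be found in closed form by setting the derivative of the loss with respect to N to zero.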

The Future of AI Scaling

While scaling has driven major breakthroughs, future progress may rely on more efficient methods and hardware, such as sparse models, low-rank adaptation, and neuromorphic computing. AI developers are also exploring self-supervised and other data-efficient learning methods that need fewer labeled examples, reducing dependence on large labeled datasets.
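Low-rank adaptation is the most concrete of the techniques named above, so a small sketch may help. Assuming the LoRA formulation of Hu et al. (2021), a frozen d×k weight matrix W is adapted by training only a rank-r product B·A, so the layer computes h = Wx + BAx. The layer size (4096×4096) and rank (8) below are hypothetical values chosen for illustration, and the helper names are made up; real implementations also scale the update by a factor α/r, which is omitted here.

```python
import random


def lora_trainable_params(d: int, k: int, r: int) -> int:
    """Trainable parameters in a rank-r update B @ A, with B of shape d x r and A of shape r x k."""
    return r * (d + k)


def matvec(m: list[list[float]], v: list[float]) -> list[float]:
    """Multiply a matrix, stored as a list of rows, by a vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def lora_forward(W, A, B, x):
    """Compute h = W x + B (A x): a frozen weight W plus a trainable low-rank correction.

    Applying A first keeps the extra cost at r * (d + k) multiply-adds and never
    materialises the full d x k matrix B @ A. (The alpha/r scaling is omitted.)
    """
    base = matvec(W, x)
    delta = matvec(B, matvec(A, x))
    return [b + u for b, u in zip(base, delta)]


# Hypothetical layer size and rank, chosen only for illustration.
d, k, r = 4096, 4096, 8
full = d * k
lora = lora_trainable_params(d, k, r)
print(f"full update: {full:,} params; rank-{r} update: {lora:,} params ({lora / full:.2%})")
# prints: full update: 16,777,216 params; rank-8 update: 65,536 params (0.39%)

# Small numeric example: with B initialised to zero (A random), the adapted
# layer initially reproduces the frozen layer exactly.
random.seed(0)
d_s, k_s, r_s = 4, 3, 2
W = [[random.gauss(0.0, 1.0) for _ in range(k_s)] for _ in range(d_s)]
A = [[random.gauss(0.0, 1.0) for _ in range(k_s)] for _ in range(r_s)]
B = [[0.0] * r_s for _ in range(d_s)]
x = [1.0, -2.0, 0.5]
h_frozen = matvec(W, x)
h_adapted = lora_forward(W, A, B, x)
```

Training the 65,536 adapter parameters instead of all 16.7 million weights in this hypothetical layer is what makes low-rank adaptation cheap to fine-tune and store, although it does not reduce the cost of running the frozen base model.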
