AI infrastructure includes high-performance computing (HPC) environments, cloud-based architectures, and modern data storage solutions. Traditional CPU-based systems struggle to handle the vast amounts of data and the highly parallel computations, chiefly large matrix multiplications, that AI workloads require, which is why GPUs (Graphics Processing Units) and TPUs (Tensor Processing Units) have become essential. These specialized processors accelerate deep learning training and inference, enabling real-time AI applications such as autonomous vehicles, recommendation systems, and conversational AI.

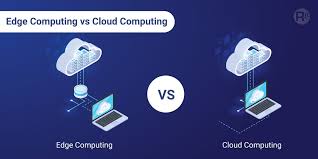

Cloud computing has transformed AI infrastructure by providing on-demand scalability and pay-as-you-go pricing. Platforms like AWS, Google Cloud, and Microsoft Azure offer specialized AI services, including machine learning as a service (MLaaS) platforms, AI-powered APIs, and cloud-based data processing tools. With serverless computing, developers can deploy models and data pipelines without provisioning or managing servers, because the platform handles capacity and scaling. At the same time, hybrid cloud and edge computing are gaining traction: hybrid setups combine on-premises and public cloud resources, while edge computing runs models closer to where data is generated instead of relying solely on centralized cloud resources. Processing at the edge reduces latency and bandwidth use, which is particularly important for IoT applications, autonomous systems, and real-time decision-making in industries like healthcare and finance.
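As a rough illustration of the serverless model, the sketch below shows an inference function written in the `(event, context)` handler shape that AWS Lambda uses for Python. The toy linear model, its weights, the handler name, and the JSON request format (an API Gateway-style `"body"` string containing `"features"`) are all hypothetical choices for this example; the point is that the developer writes only this function, while the platform decides where and how many copies of it run.

```python
import json

# Hypothetical model parameters, for illustration only. A real function would
# load a trained model (e.g. from object storage) at module level, outside the
# handler, so that warm invocations can reuse it.
WEIGHTS = [0.4, -0.2, 0.1]
BIAS = 0.05


def predict(features):
    """Toy linear model standing in for a real trained model."""
    if len(features) != len(WEIGHTS):
        raise ValueError(f"expected {len(WEIGHTS)} features, got {len(features)}")
    return sum(w * x for w, x in zip(WEIGHTS, features)) + BIAS


def lambda_handler(event, context):
    """Serverless entry point in the (event, context) shape Lambda uses.

    Assumes (hypothetically) that event["body"] is a JSON string such as
    '{"features": [1.0, 2.0, 3.0]}'. The context argument is unused here.
    """
    try:
        body = json.loads(event.get("body") or "{}")
        score = predict(body["features"])
    except (KeyError, ValueError, TypeError) as exc:
        # Malformed JSON, a missing "features" key, or the wrong number or
        # type of features all map to a client error.
        return {"statusCode": 400, "body": json.dumps({"error": str(exc)})}
    return {"statusCode": 200, "body": json.dumps({"score": score})}
```

Locally, the handler can be exercised like any other function, for example `lambda_handler({"body": '{"features": [1.0, 2.0, 3.0]}'}, None)`, which is a convenient way to unit-test serverless code before deploying it.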

In addition, containerization technologies are streamlining AI deployment. Docker packages an application together with all of its dependencies into a container image, so it runs consistently across development, testing, and production environments, and Kubernetes orchestrates those containers across a cluster, handling scheduling, scaling, and restarts. MLOps (Machine Learning Operations) is another crucial aspect of AI infrastructure, applying DevOps practices to machine learning workflows to enable continuous integration, deployment, and monitoring of AI models. Together, these advancements make AI systems more resilient, scalable, and cost-effective, allowing businesses to adopt AI without extensive in-house infrastructure investments.
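To make "continuous monitoring" a little more concrete, one common check compares the distribution of a model input in production against the distribution seen during training. The sketch below uses the population stability index (PSI), a technique chosen here for illustration rather than one the article prescribes. The bin edges, the small epsilon for empty bins, and the 0.2 alert threshold are assumptions; 0.2 is a frequently quoted rule of thumb, not a standard.

```python
import bisect
import math


def bin_fractions(values, edges):
    """Fraction of values in each bin defined by sorted edges.

    Values below the first edge are clamped into the first bin, and values
    at or above the last edge into the last bin.
    """
    if not values:
        raise ValueError("values must be non-empty")
    n_bins = len(edges) - 1
    counts = [0] * n_bins
    for v in values:
        i = bisect.bisect_right(edges, v) - 1
        counts[min(max(i, 0), n_bins - 1)] += 1
    return [c / len(values) for c in counts]


def population_stability_index(expected, actual, edges, eps=1e-6):
    """PSI = sum over bins of (a - e) * ln(a / e); eps avoids log(0)."""
    psi = 0.0
    for e, a in zip(bin_fractions(expected, edges), bin_fractions(actual, edges)):
        e, a = max(e, eps), max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


def check_drift(expected, actual, edges, threshold=0.2):
    """Return (psi, drifted). The 0.2 threshold is an assumed rule of thumb."""
    psi = population_stability_index(expected, actual, edges)
    return psi, psi > threshold


if __name__ == "__main__":
    training = [i / 100 for i in range(100)]  # hypothetical training feature
    live = [0.9] * 100                        # hypothetical shifted live data
    edges = [0.0, 0.25, 0.5, 0.75, 1.0]
    print(check_drift(training, training, edges))
    print(check_drift(training, live, edges))
```

In an MLOps pipeline, a scheduled job might run a check like this on recent production data and raise an alert or trigger retraining when `drifted` is true; the exact response is a policy decision for each team.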
